Below are selected research projects I have been involved in.

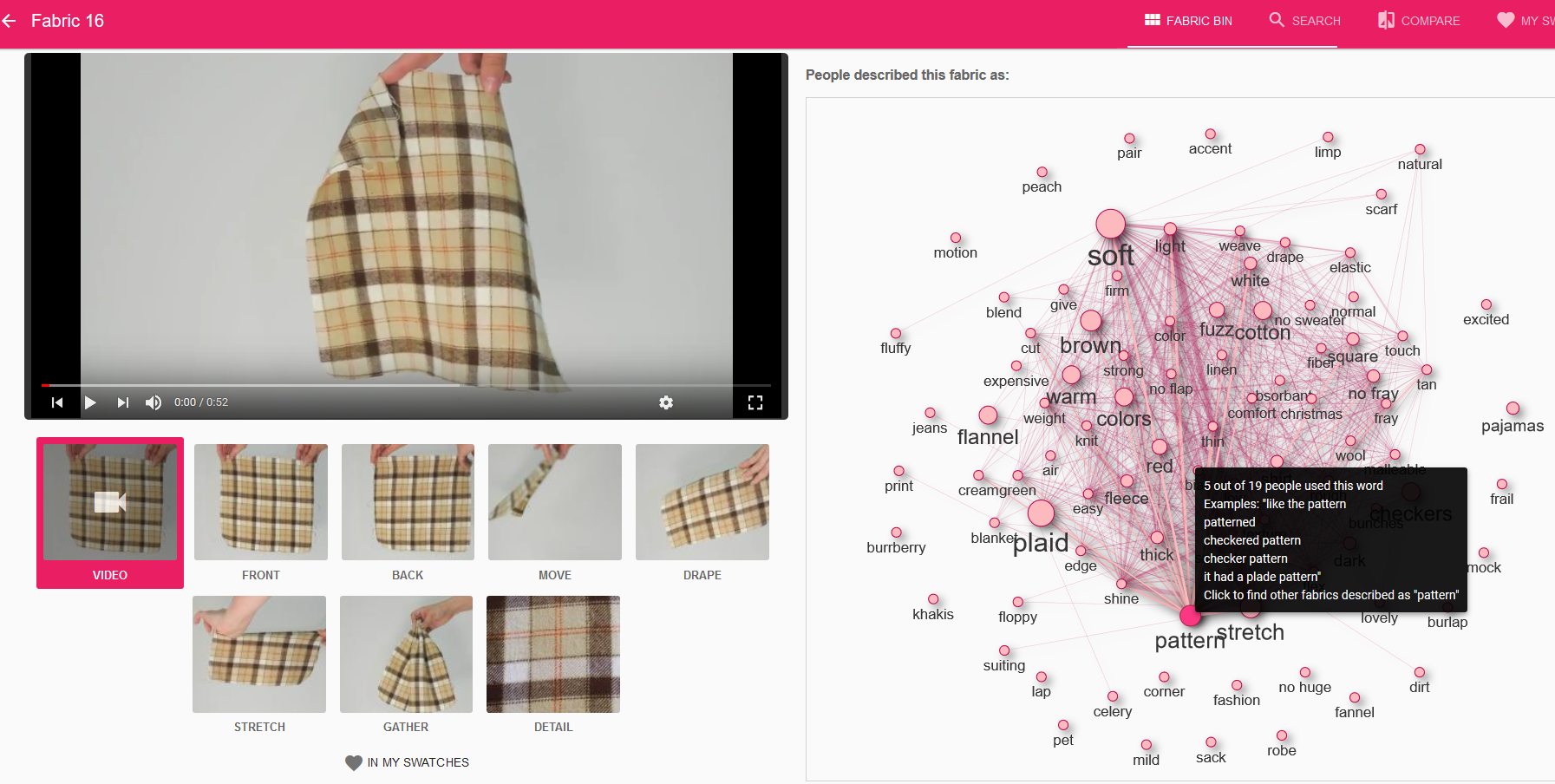

Negotiating Ambiguity in Exploring Materials Online

Designers use a myriad of words to describe a single piece of fabric: contradictory words (“thick” and “thin”), ambiguous words (“light” in color or in weight), and context-specific words. How can we use this information to help designers explore materials online?

We began with studies to better understand how designers explore and describe fabric, and how to crowdsource those descriptions. I then designed and evaluated a fabric exploration system that shows both images and video of the material alongside user-generated descriptors, an approach that could improve online shopping systems more broadly.

Relevant Publication:

Leal, A. “Negotiating Material Description Through Technology.” PhD Thesis.

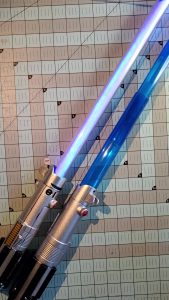

Sustainably Building a Lightsaber During a Crisis

The pandemic exposed large gaps in technology and internet access across socioeconomic groups, from people working out of their cars because of unreliable home internet to students frantically buying the new laptops they needed for class.

As an educator, I am keenly aware of students’ limited access to 3D printers, laser cutters, and other fabrication tools.

This project was a process of trial and error, with the challenge of using only inexpensive, accessible tools and methods. I also rescued a lightsaber toy from the trash, giving it more awesome power and championing sustainability through reuse.

This build and the resulting tutorial won Make Media & Nvidia’s May the 4th Maker Challenge. Here’s the award-winning submission and tutorial.

Fabric3D

We explored how fabric, a commonplace medium, can be used as a 3D input device for designing surfaces, specifically for costume and garment design.

The video below highlights an early version of the system and sketches what interacting with fabric could look like and how it can be used for design.

Publications:

Leal, A., Schaefer, L., Bowman, D., Quek, F., and Stiles, C. “3D Sketching Using Interactive Fabric for Tangible and Bimanual Input.” In Proceedings of Graphics Interface 2011, May 2011 (31% acceptance rate).

Leal, A., Bowman, D., and Quek, F. “Innovative 3D Surface Design Using Fabric: 3D Tangible, Two-Handed Direct Input.” CHIMe Workshop at CHI 2010, and Grace Hopper Celebration of Women in Computing 2010.

Pixelbending

With the goal of developing a novel and cool 3D interface, Andy Wood and I, advised by Doug Bowman, made a waterbending trainer app in which you control water using continuous, martial arts-like movements. Inspired by Avatar: The Last Airbender, we wanted players to fully control the water, rather than strike a static pose and watch a fixed burst of water shoot out. Depending on how you perform the martial arts-like gestures, you can control the intensity, speed, and angle of the moving water. The system uses a 3DTV, a Microsoft Kinect, and the Unreal Development Kit.

3DUI Symposium Contest Grand Prize 2010

The IEEE Symposium on 3D User Interfaces held its first-ever contest, in which the task was to select small objects in a large virtual supermarket. We won first prize in the live contest! Below is what we developed:

Publications:

P. Figueroa, Y. Kitamura, S. Kuntz, L. Vanacken, S. Maesen, T. D. Weyer, S. Notelaers, J. R. Octavia, A. Beznosyk, K. Coninx, F. Bacim, R. Kopper, A. Leal, T. Ni, and D. A. Bowman. 3DUI 2010 contest grand prize winners. IEEE Computer Graphics and Applications, 30:86–96, 2010.

Chatty Wheatley: Multi-Sensor Plush Toy

As a physical computing project exploring innovative interactions with electronics, Yasmine El-Glady and I built Wheatley, a plush toy inspired by the ball-like robot character from the hit game Portal 2.

Using an Arduino, an audio shield, a tilt sensor, an ultrasonic sensor, an accelerometer, and more, Wheatley chats with you as you walk by, says goodbye, and reacts when he is picked up and carried or set down. When he is alone, he also talks to himself at random to keep himself company.

This project was not only electronics-heavy; it also involved programming, processing, and design work to make the plushie.